Frontier labs go enterprise: the next phase of software and infrastructure modernization


There is a wave of application rebuilding and replacement going on. At Eli5, we review articles about software modernization every week to find real value for CTOs, PMs, and POs who have to deal with the modernization of legacy software.

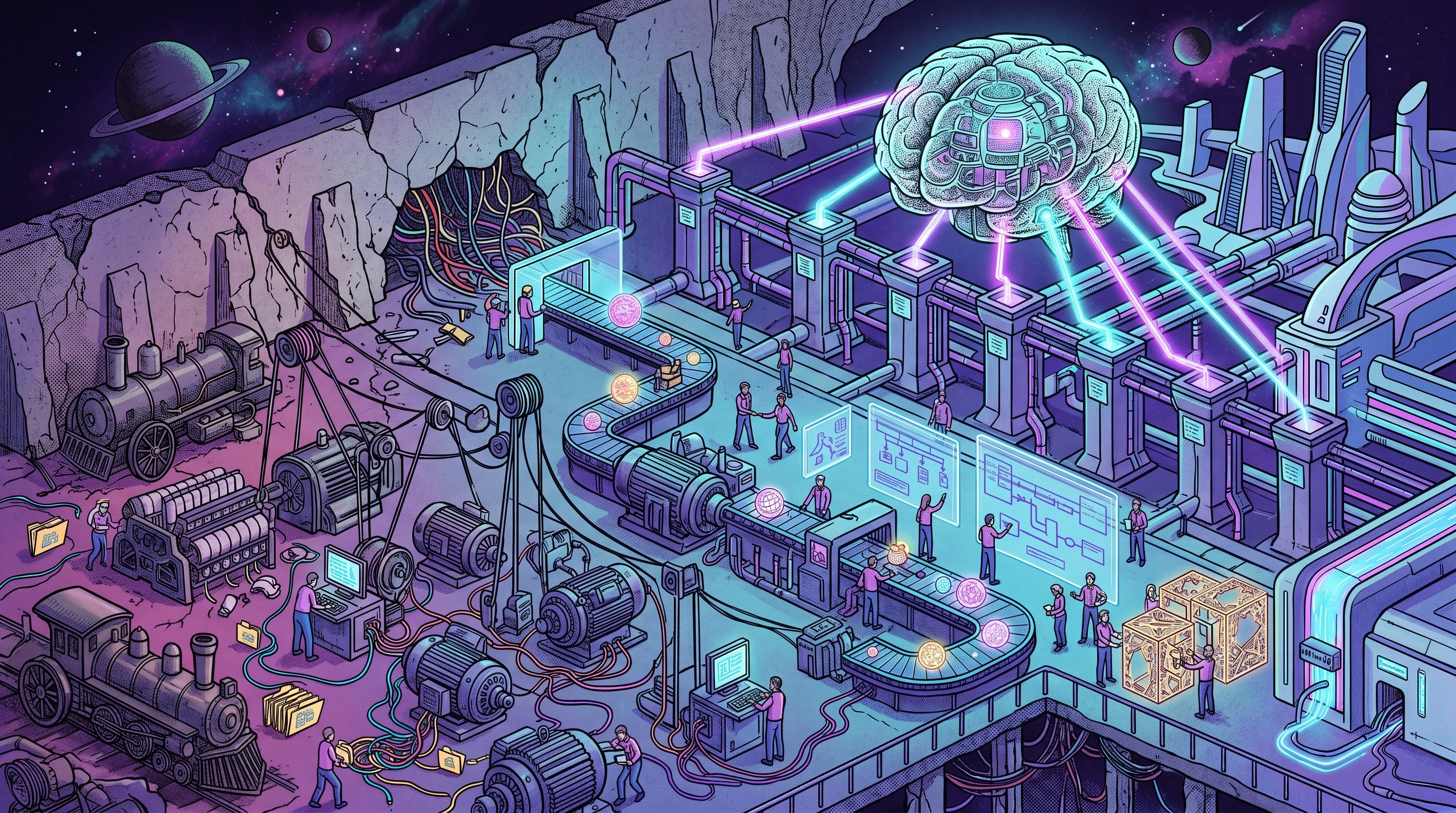

This week we reviewed two announcements that landed within seven days of each other and that, taken together, signal a real shift in how enterprise AI is going to be delivered. On May 4th, Anthropic announced a new enterprise AI services company together with Blackstone, Hellman & Friedman and Goldman Sachs, with a stated focus on mid-sized businesses such as community banks, regional health systems and multi-site healthcare groups. Seven days later, on May 11th, OpenAI launched its own Deployment Company. It is backed by TPG, Bain Capital, Brookfield, Goldman Sachs and a long list of others, starts with more than $4 billion in initial funding, and is acquiring an applied AI firm called Tomoro to bring in roughly 150 forward deployed engineers from day one. Both announcements say the same thing in different words: the model itself is no longer the bottleneck; the deployment inside the organization is.

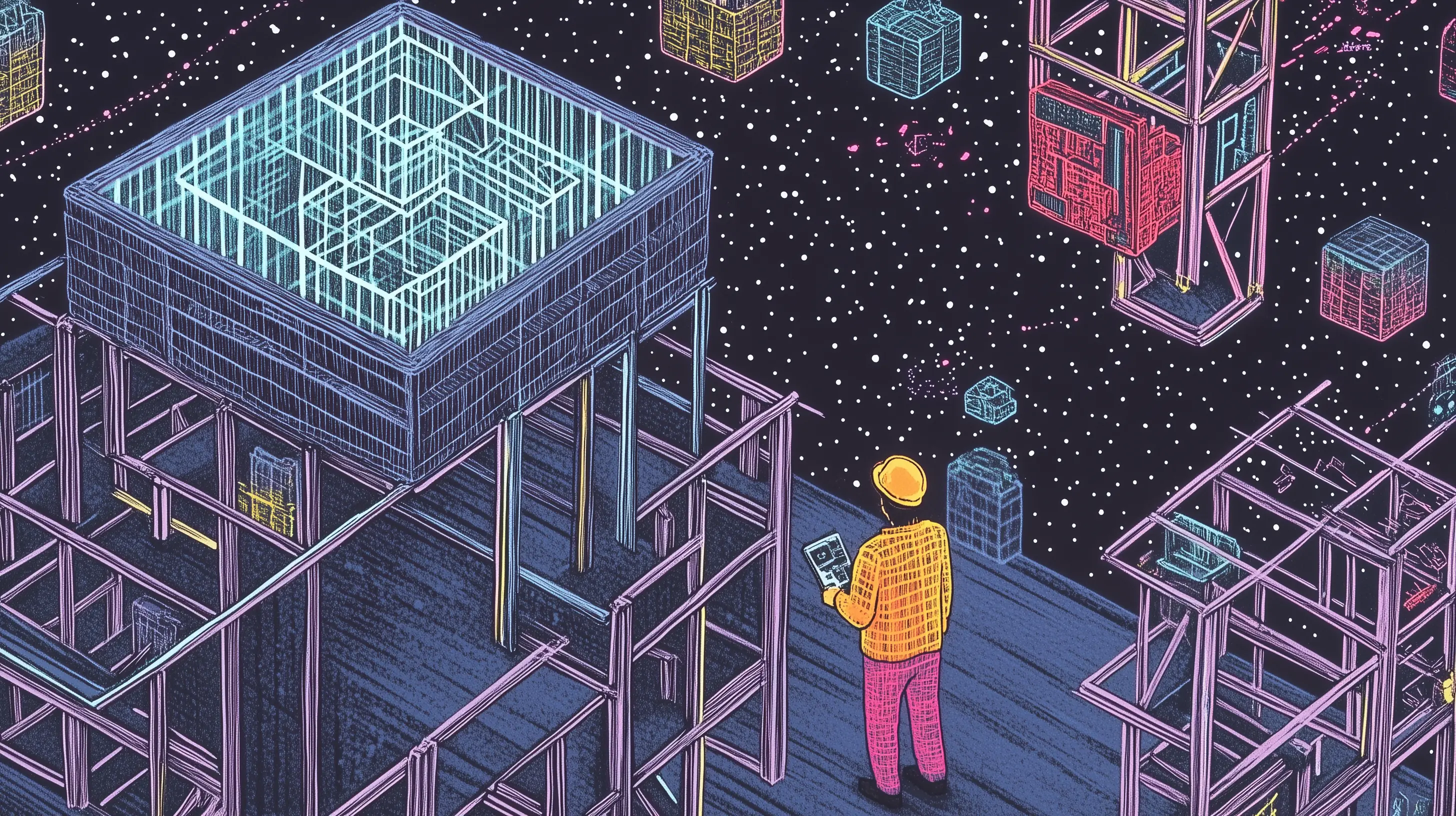

For anyone working on software modernization, these announcements confirm what we have been saying for months. The real wall in enterprise AI is the state of the systems and workflows around the model, not the intelligence of the model itself. Legacy databases, undocumented business logic, brittle integrations and outdated processes stop most AI initiatives long before they reach production. OpenAI and Anthropic are now both betting that the way through that wall is to put their own engineers inside the customer and rebuild the workflow from there.

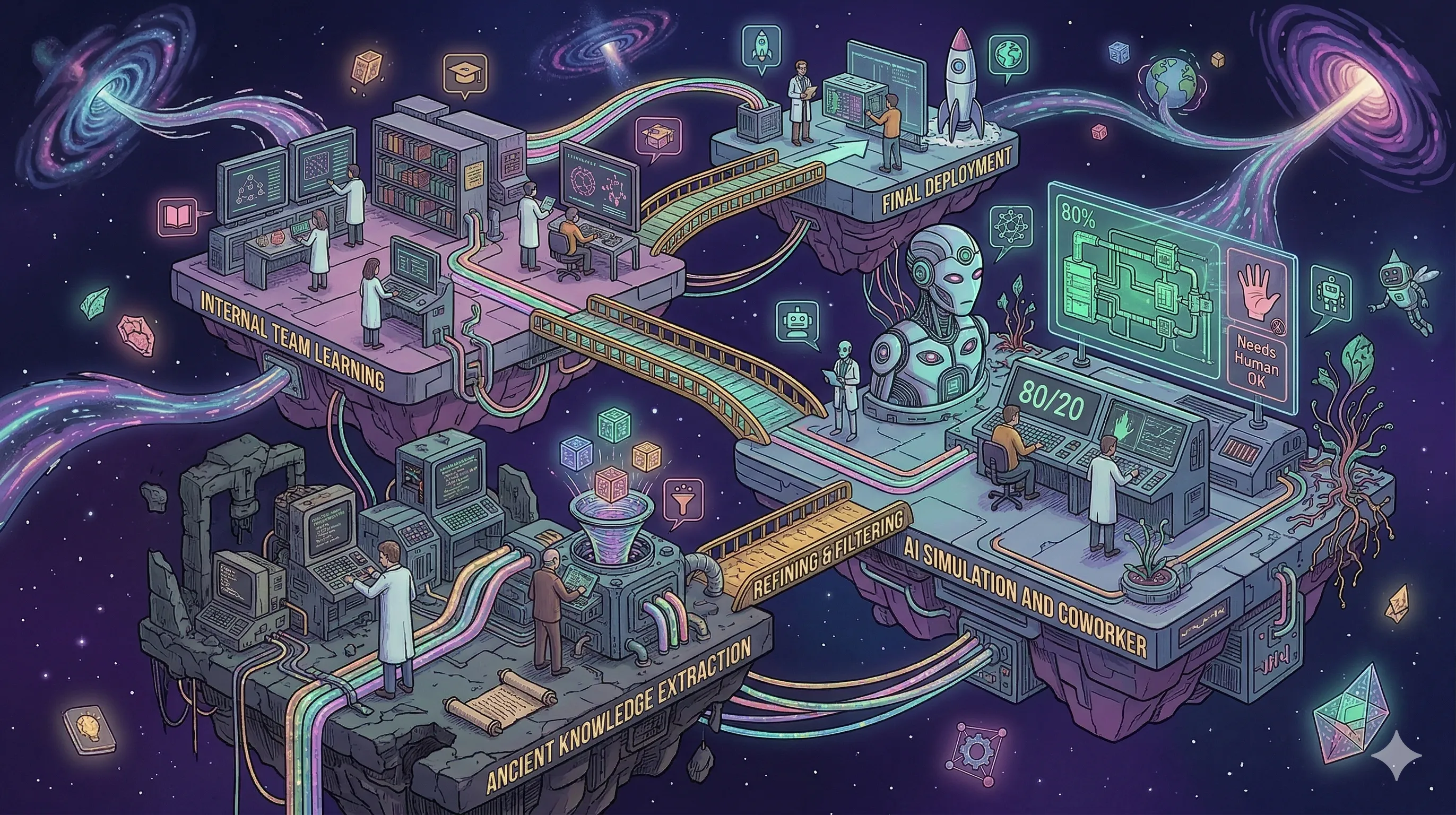

Abstract. Within seven days of each other, the two largest frontier model providers announced new enterprise AI services companies with strikingly similar structures. On May 4th, Anthropic, together with Blackstone, Hellman & Friedman and Goldman Sachs, announced a new AI services company aimed at mid-sized organizations such as community banks, regional health systems and multi-site healthcare groups. Applied AI engineers from Anthropic will work alongside the new company's own engineering team to identify where Claude fits, build custom solutions and run them over the long term. The company also joins the Claude Partner Network alongside Accenture, Deloitte and PwC. Seven days later, OpenAI announced the OpenAI Deployment Company, a majority-owned standalone business unit backed by 19 investment firms, consultancies and system integrators, led by TPG with Advent, Bain Capital and Brookfield as co-leads. It launches with over $4 billion in initial investment and is acquiring applied AI firm Tomoro to bring in roughly 150 Forward Deployed Engineers from day one. Both ventures state the same goal: embed engineers inside customers, redesign workflows around frontier AI, and turn model capabilities into durable operating systems.

Review and insights

Two of the biggest frontier model providers in the world made the same move in the same week, with different partners but with strikingly similar structures. Here are the core themes that came up in our conversation.

Going for the bigger piece of the pie. Enterprises spend roughly six times as much on services, integration and maintenance as they do on the underlying software licenses. That ratio is exactly why IBM, SAP and Salesforce have always relied on consulting and system integration partners to make their software actually work inside an organization. What OpenAI and Anthropic are doing now is going after that share of the revenue themselves. They had the technology but lacked the service muscle, and they are closing that gap directly: OpenAI by buying it through the Tomoro acquisition, Anthropic by partnering with private equity and deploying its Applied AI staff alongside its existing Claude Partner Network.

The Palantir playbook. This pattern has been around for a while. Palantir built its business by placing forward deployed engineers inside customers, writing the playbook on how to use the software while billing for the people who write it. You earn from the software and from the services at the same time. OpenAI and Anthropic are running their own version of that model, with deeper pockets and a much bigger underlying product.

Surprising timing, logical motivation. Both companies looked at the gap between what their models can do and what is actually getting deployed inside organizations, and concluded that the gap will close too slowly on its own. Models are improving faster than enterprises can absorb them. For a company like OpenAI, which has to defend a valuation that assumes massive enterprise adoption, waiting for the traditional system integrators to catch up is too risky. Going in directly speeds up the institutional AI adoption curve.

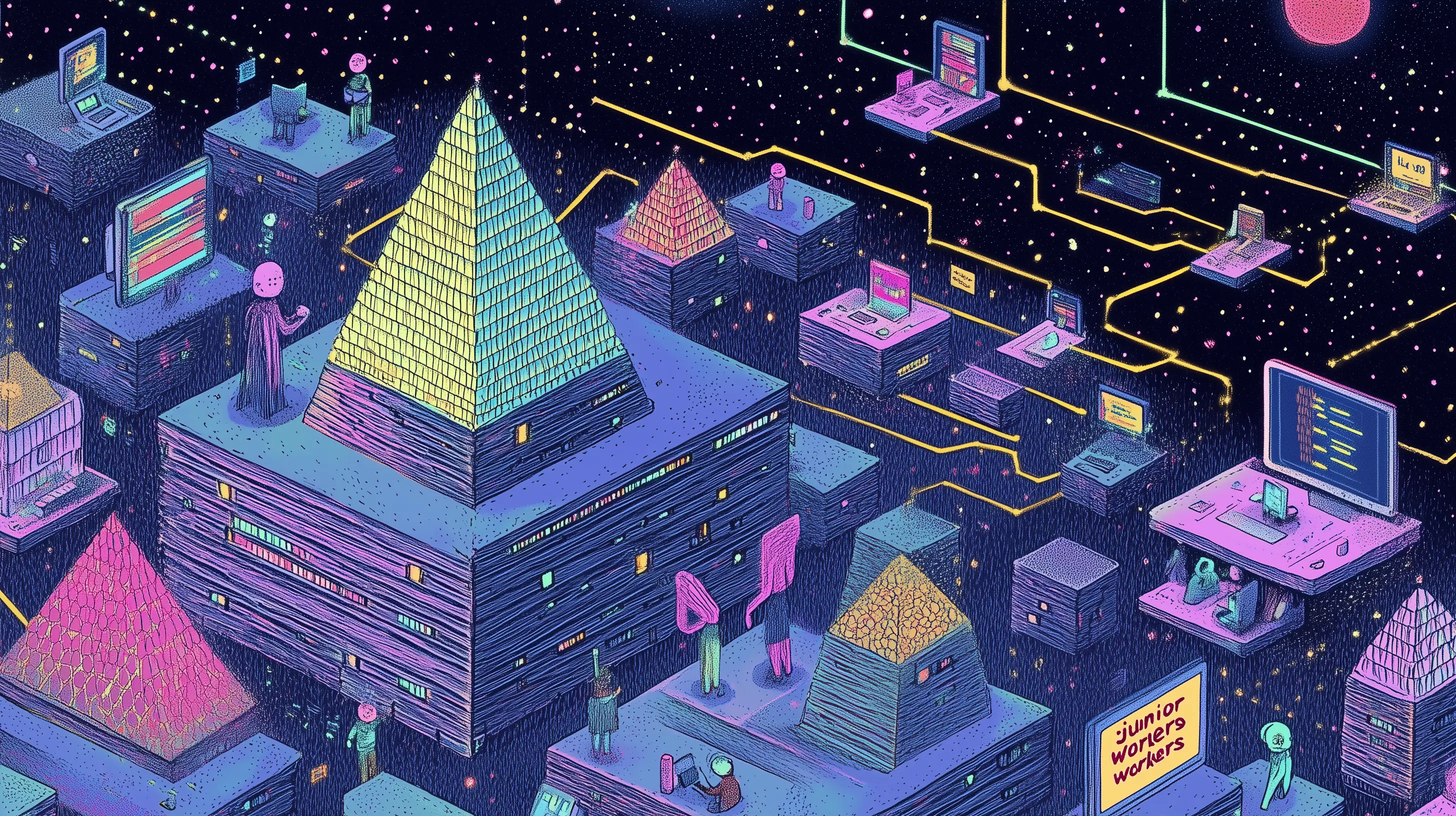

The A-team problem. The first one or two hundred forward deployed engineers will probably do remarkable work. They will be the A-team of the business, sitting inside the highest-value clients. Once you scale past that, the engagement starts to look like a normal services business, with juniors, mid-levels, managers, salespeople, and the quality drift that comes with all of them. Every services company we know runs into this, including our own. Keeping A-team quality across thousands of engagements is structurally very hard.

Vendor lock-in by architecture. Once the first forward deployed engineer sits down with your team and starts designing the architecture, they are also setting the defaults for which model, which tools, which orchestration layer and which data integrations get used. From OpenAI's and Anthropic's business perspective, this is obviously the point: lock-in is part of the strategy. From our perspective at Eli5, where we deliberately stay independent and design for the ability to swap models and orchestration layers, this is a problem CTOs need to see coming before they sign anything.

The data goldmine question. When a model provider also becomes the service provider, it gains direct access to the customer's internal data through its own engineers. We do not yet know where the contractual boundaries will be drawn. Internal enterprise data is the most valuable training data on earth precisely because it is not public. Even if the agreements are clean, the model provider has never been this close to that data.

Subsidized pricing to win the market. OpenAI has reportedly promised investors a 17% annual return from the start, which is unusual for an investment of this scale and stage. To hit that target quickly, expect aggressive pricing. The competition for these deployment companies comes from offshore system integrators on one side and the big consultancies on the other. Both can be undercut by a provider willing to subsidize the engagement with model revenue, multi-year contracts, or both.

Modernizing the engine instead of redesigning the factory. What stands out is what these announcements do not contain. There is very little about reinventing how a company is structured around AI. The framing is almost entirely about embedding frontier AI into the workflows that already exist. That is exactly the part of the modernization conversation we keep coming back to. Putting a modern engine in a legacy chassis rarely works. Workflows, data architecture and team design need to be rebuilt before any of this delivers durable ROI. The big consultancies in these consortia (Bain, McKinsey, Capgemini, Accenture, Deloitte, PwC) will almost certainly handle that organizational redesign work, while the model providers' engineers handle the technical layer.

The European angle. Europe does not have a frontier LLM at the same level as GPT or Claude. Mistral is doing serious work but is still behind. The big consultancies in this story all have European branches, but their center of gravity sits in the US. The combination of capital, risk appetite and uniform labor rules that made these announcements possible in the first place is fundamentally a US ecosystem. That puts European mid-sized companies in a particular spot: they match the target market Anthropic named, while the providers, the engineers and the operating model are all imported.

Concluding remarks

The move is surprising in its timing and logical in its motivation. The frontier model companies looked at the institutional AI adoption curve, decided it was moving too slowly, and stepped into the services business themselves to speed it up. They get a bigger share of the customer wallet, they shape the architecture from inside, and they make sure the workflows that get rebuilt are rebuilt around their own models. From a business standpoint, the logic is strong. From a CTO/CEO standpoint, it changes the calculation on every AI engagement that starts from this point forward.

The hard part underneath all of this has not changed. Before any frontier model delivers value inside an organization, somebody still has to deal with the legacy systems, the data fragmentation and the workflows that were never designed to work with intelligent software in the first place. That work does not get faster because OpenAI sends an engineer. It is the same work it has always been. The question for European mid-sized companies is whether they want that work done by someone tied to one model provider, or by someone independent who can pick the right tools for the job.

What this means for you as a CTO or PO. If you are leading a mid-sized European company and watching these announcements, the worst move is to do nothing. Picking a side too early is almost as bad. Get independent advice in the building. Understand your own stack first. Know which workflows are actually ready to be redesigned around AI and which ones need modernization work first. Then decide whether to pick a team at all.

Full video episode: Frontier labs go enterprise: the next phase of software and infrastructure modernization

The first step to start your modernization journey

Software modernization and architectural rebuilds lie at the heart of Eli5. We solve complexity to deliver direct business value by focusing on pragmatic, cloud-native transitions.

Before you decide whether to wrap your legacy system, buy a new SaaS product, or use AI to build custom tools, you need total visibility into your current tech landscape.

Would you like to book a free brainstorm to discuss your legacy stack? It is the essential first step to turning your technical debt into a scalable, modular future.
